Claudia Wagner, David García, Mohsen Jadidi, Markus Strohmaier

Proceedings of the 9th International AAAI Conference on Weblogs and Social Media (2015)

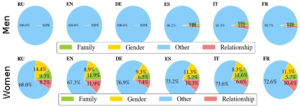

Wikipedia is a community-created encyclopedia that contains information about notable people from different countries, epochs and disciplines and aims to document the world’s knowledge from a neutral point of view. However, the narrow diversity of the Wikipedia editor community has the potential to introduce systemic biases, such as gender biases, into the content of Wikipedia. In this paper, we tackle a subproblem of this larger challenge by presenting and applying a computational method for assessing gender bias on Wikipedia along multiple dimensions. We find that while women on Wikipedia are covered and featured well in many Wikipedia language editions, the way women are portrayed differs starkly from the way men are portrayed. We hope our work contributes to increasing awareness of gender biases online, and in particular to drawing attention to the different levels at which gender biases can manifest themselves on the web.

Links

Selected Media Response

- MIT Technology Review – Computational Linguistics Reveals How Wikipedia Articles Are Biased Against Women

- New York Times Arts Beat – MoMA to Host Wikipedia Editing Marathon, to Improve Coverage of Women in the Arts

- Wikipedia Admin article – Writing about women

- Wikipedia Signpost

- Motherboard Vice – Wikipedia’s Gender Problem Has Finally Been Quantified

- Fast Company – More Like Dude-ipedia: Study Shows Wikipedia’s Sexist Bias

- The Times – Wikipedia editors are accused of sexism

- Wired Germany – Neue Studie: Männer werden auf Wikipedia häufiger verlinkt als Frauen

- El País – La Wikipedia, ¿cosa de hombres?

- Spektrum – Liebling der Wissenschaft

- Le Temps – Wikipédia. Comment féminiser un village de Schtroumpfs